🎧 Podcast version available Listen on Spotify →

Most decisions are bad for the same reason.

One perspective. One set of assumptions. One framework baked in from the start. Nobody pushing back hard enough. Everyone optimizing for consensus instead of truth.

I wanted to fix that. So I built a system that forces disagreement.

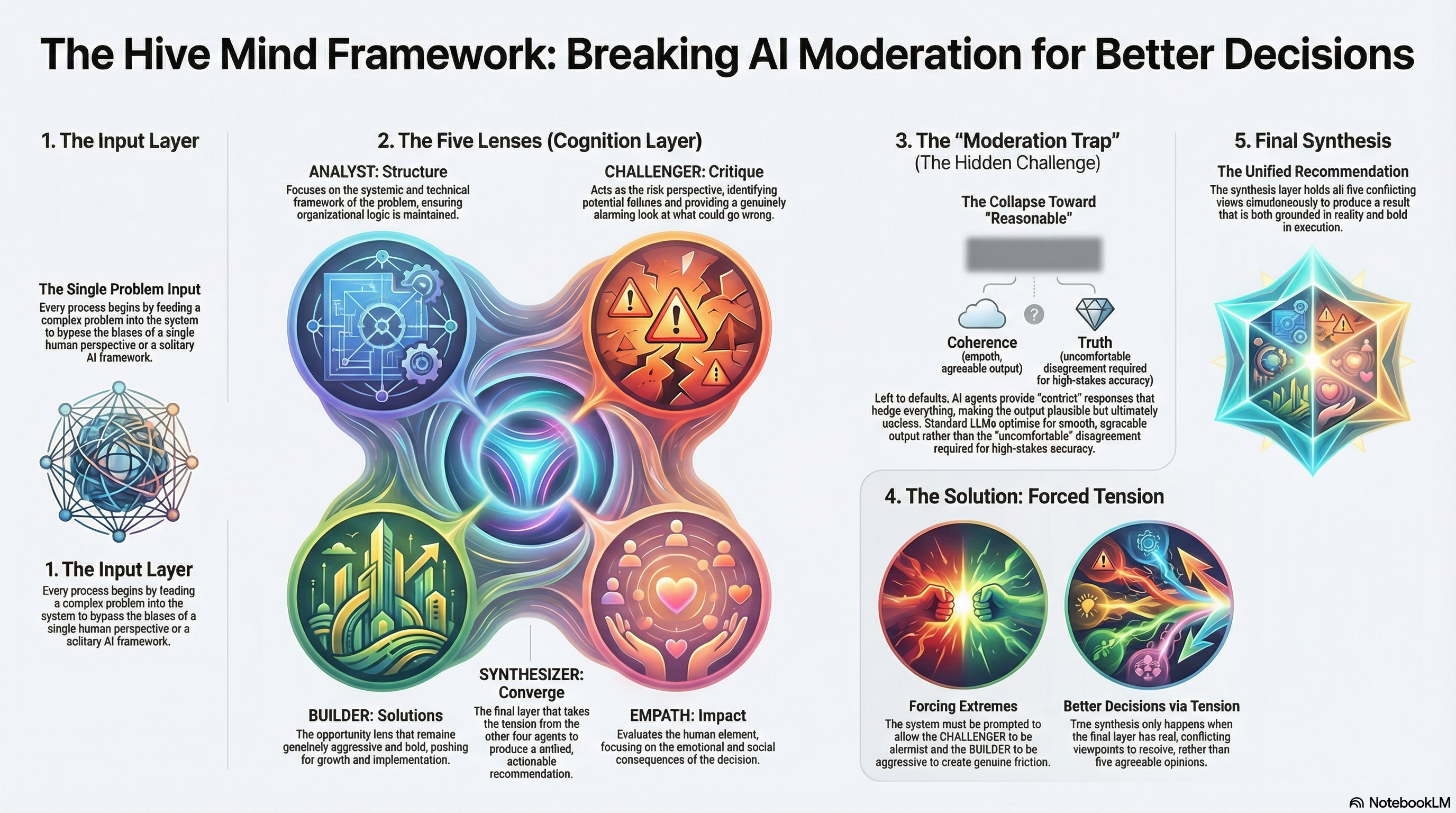

The Hive Mind takes a problem and runs it through five AI agents simultaneously — technical, human, risk, opportunity, systemic. Each argues from its position. A synthesis layer then produces one recommendation that holds all five views at once.

Simple idea. Messy execution. Real things learned.

The Part I Got Wrong

I expected the synthesis layer to be the hard part.

It wasn’t.

The hard part was making each perspective genuinely distinct. Left to its defaults, the model kept collapsing five viewpoints into the same moderate, balanced, hedge-everything answer.

The “risk perspective” wasn’t actually pessimistic. The “opportunity perspective” wasn’t actually bold. Without strong directional prompting, you get five variations of the same centrist response.

Which defeats the entire point.

This is a known problem with LLMs. They optimize for reasonable.

Reasonable isn’t always useful.

The Fix That Actually Worked

Force the perspectives to be extreme first. Then synthesize.

Let the risk perspective be genuinely alarming. Let the opportunity perspective be genuinely aggressive. Let them disagree in ways that feel uncomfortable.

The synthesis layer then has real tension to work with.

That tension is the point. Good decisions come from real conflict — not from five agreeable opinions that were never actually different. I’ve seen this in real implementations: the teams that make the best decisions aren’t the ones that agree fastest. They’re the ones that disagree loudest before they commit.

The Hive Mind just mechanizes that process.

What It Taught Me About How LLMs Actually Reason

LLMs are extremely good at producing plausible output.

Plausible isn’t the same as useful. It’s not the same as correct.

The Hive Mind made this visible because I had a feedback mechanism — when all five perspectives agreed too quickly, I knew the model was lying to me. Optimizing for coherence instead of truth.

Most AI implementations don’t surface this. They present one answer, confidently, and you have no way to know how much was smoothed over to get there.

Designing systems that force real disagreement instead of manufacturing consensus — that’s actually hard.

Most AI implementations don’t do it.

Most human processes don’t either.

Where It Stands

I think it works. The synthesis layer does what it’s supposed to do.

But I’m biased. I built it.

Try it and decide for yourself.

But it’s already one of the more honest tools I’ve built — because it shows you the complexity instead of hiding it. You don’t get a clean answer. You get the full picture and have to decide.

That’s not a bug.

That’s what thinking is supposed to feel like.

Try it: ai-hive-mind.vercel.app